MemoryS 2026 | Longsys Unveils SPU and iSA, Unlocking 8 Key Innovations in Edge AI Storage

2026.04.22

Shenzhen, March 27, 2026 – CFM | MemoryS 2026 opened in Shenzhen, bringing together leaders from across the global storage industry to discuss how the sector is changing in the AI era. Mr. Huaibo Cai, Chairman and General Manager of Longsys, delivered a keynote titled “Integrated Storage: Exploring Edge AI”. Drawing on industry trends and Longsys’ accumulated technology, he outlined the company’s view of edge AI storage and its innovations in positioning, business model, products and technologies.

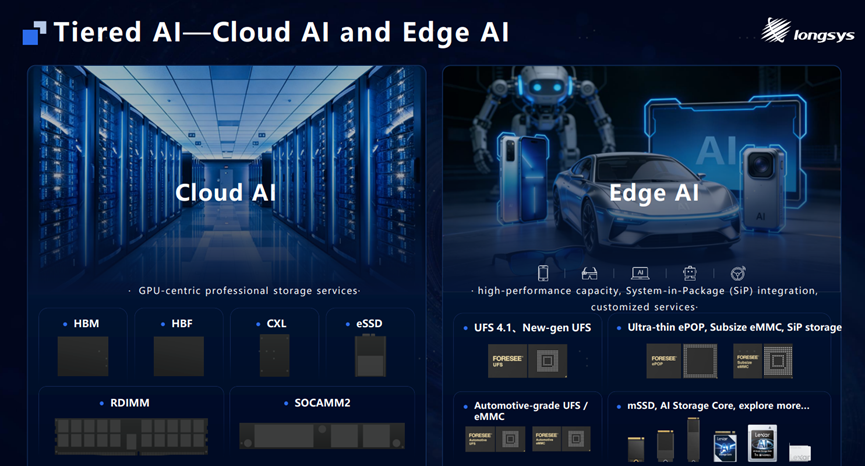

Highlight 1: Tiered AI Storage Demand, Building Full-Scenario Edge AI Storage Applications

The speech opened with the tiered development of the AI industry and distinguished the storage needs of cloud and edge AI. Cloud AI relies on GPU-centric professional storage services, while edge AI has three core demands: high-performance capacity, System-in-Package (SiP) integration, and customized services. These requirements differ fundamentally from those served by the legacy standard storage ecosystem. Just as consumer GPUs and AI-dedicated GPUs belong to distinct systems (the former relying on a general-purpose chip ecosystem, the latter built for complete AI system products), edge AI calls for deeply integrated, customized storage solutions rather than off-the-shelf standard products.

With this positioning, Longsys focuses on integrated edge AI storage solutions for scenarios including AI smartphones, AI-assisted driving, AI wearables, AI PCs and embodied robots. This gives its edge AI storage innovation a clear scenario-oriented direction and complements cloud AI storage.

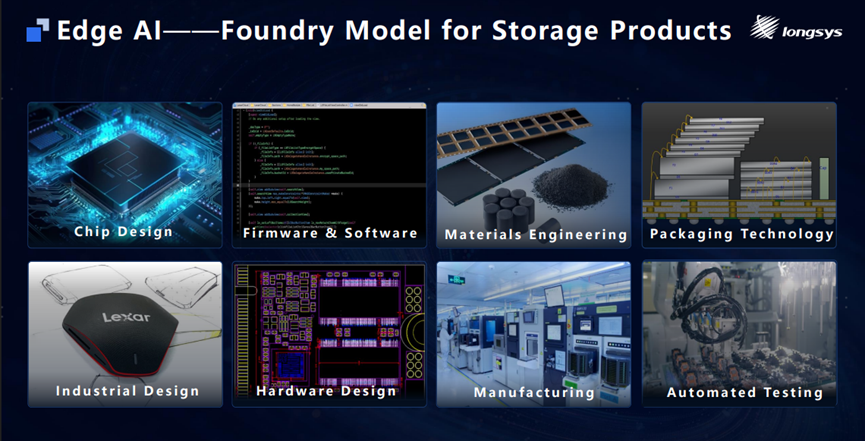

Highlight 2: Foundry Model for Edge AI Storage Products

To address the diverse, customized needs of edge AI storage, Longsys has established an end-to-end customized Foundry Model for edge AI storage. Rather than upgrading a single element at a time, as in traditional storage development, the model targets improvements across the whole product.

The model covers the core links of the industrial chain: chip design, hardware design, firmware and software, packaging technology, industrial design, automated testing, materials engineering and manufacturing. By collaborating closely, integrating technologies and opening up its capabilities across all of these links, Longsys can customize storage products end to end and deliver them efficiently. The model is Longsys’ core strategy for edge AI storage and offers the industry a reference for a new way of working.

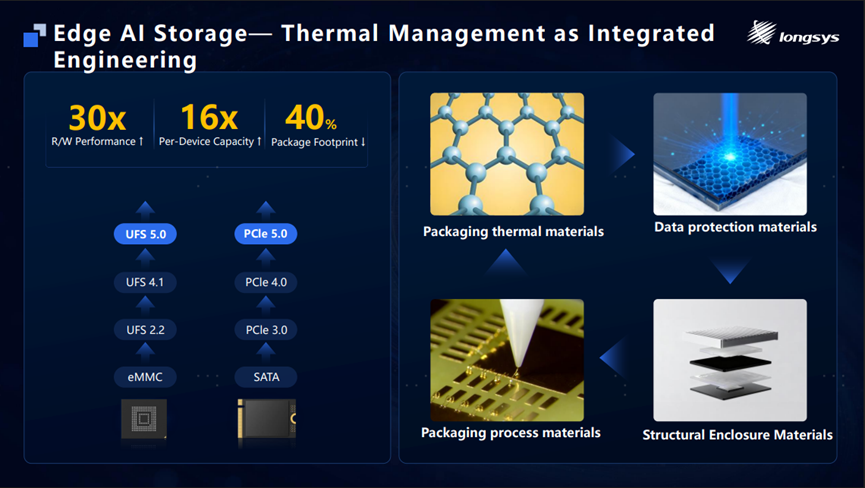

Highlight 3: Anchoring Comprehensive Materials and Thermal Management Engineering Capabilities

The development of edge AI storage relies heavily on materials engineering, and thermal interface materials are a core technical challenge that tests a vendor’s integration capabilities.

On the technical roadmap, embedded storage has evolved from eMMC to UFS 5.0, and SSDs from SATA to PCIe 5.0. Every core upgrade targets three goals: faster read/write performance, higher single-chip capacity, and smaller package sizes. Achieving them relies on innovation in four key areas of materials engineering:

· Packaging thermal materials: Meet the heat dissipation demands of high-speed UFS and SSD products;

· Packaging process materials: Ensure the process performance and reliability of SiP products;

· Data protection materials: Guarantee stable data retention in X-ray and other radiation environments;

· Structural enclosure materials: Adopt three-proof and anti-EMI designs to solidify the material foundation of edge AI storage.

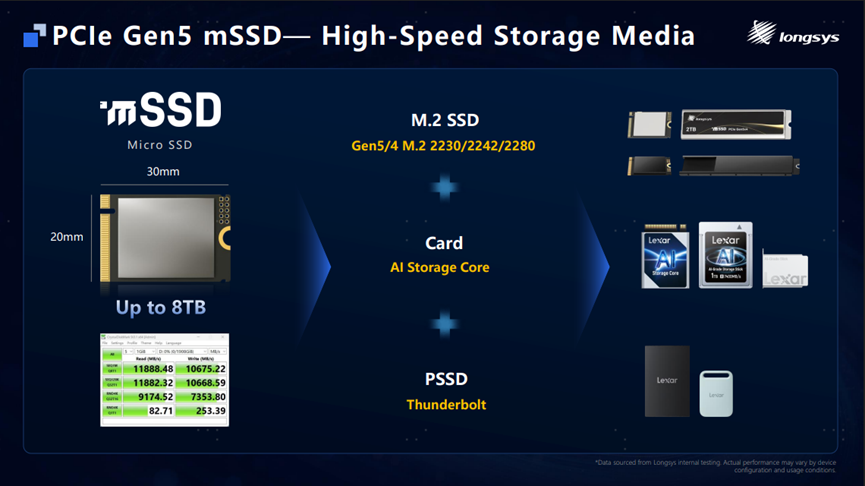

Highlight 4: PCIe Gen5 mSSD, Next-Generation High-Speed Storage Media

Building on this technical direction and these underlying capabilities, and following the launch of its PCIe Gen4 mSSD in 2025, Longsys introduced its next-generation high-speed storage media: the PCIe Gen5 mSSD.

The product retains a DRAM-less design and an ultra-compact 20×30mm form factor. It is compatible with the M.2 2230 specification and can be adapted to M.2 2242/2280, AI/solid-state memory cards, PSSDs and other form factors. Customers can adopt it without redesigning existing layouts, which supports multi-form-factor, high-performance and high-capacity storage designs.

Powered by the Maxio 1802 controller, the PCIe Gen5 mSSD delivers sequential read and write speeds of up to 11GB/s and 10GB/s respectively, random read and write performance of up to 2200K and 1800K IOPS respectively, and a maximum single-drive capacity of 8TB. These specifications target the high-speed, high-capacity storage needs of edge AI devices such as AI PCs.

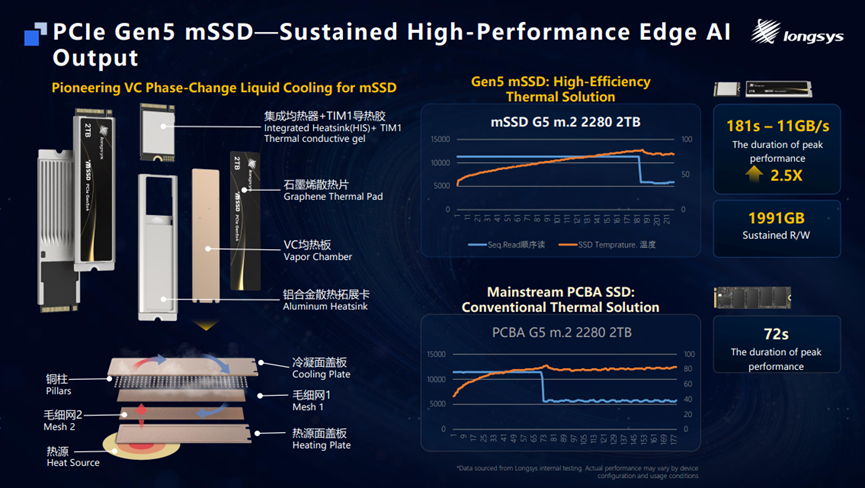

Highlight 5: High-Efficiency Thermal Solution for PCIe Gen5 mSSD, Ensuring Sustained High-Performance Edge AI Output

To address the thermal challenges of running the PCIe Gen5 mSSD at high performance in a small form factor, Longsys has pioneered a dedicated high-efficiency thermal solution that applies vapor chamber (VC) phase-change liquid cooling to mSSDs for fast heat conduction and efficient dissipation.

The solution integrates multiple thermal components, including a vapor chamber + TIM1 thermal gel, graphene heat sink, VC vapor chamber and aluminum alloy thermal expansion card. According to Longsys’ test data, the design extends the time the drive sustains its 11GB/s peak performance to 181 seconds, for a continuous read volume of 1991GB, nearly 2.5 times that of standard PCBA SSD thermal solutions.
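The two test figures are consistent with each other: holding 11GB/s for 181 seconds reads 1991GB. The short Python check below reproduces that arithmetic. The baseline values it derives are not quoted in the announcement; they are an inference that assumes the "nearly 2.5 times" ratio applies to read volume at the same 11GB/s rate.

```python
# Figures quoted in the text above.
PEAK_READ_GB_PER_S = 11      # peak sequential read rate
PEAK_HOLD_SECONDS = 181      # time the peak is held with the Longsys thermal design
QUOTED_TOTAL_GB = 1991       # continuous read volume quoted in the text
QUOTED_ADVANTAGE = 2.5       # "nearly 2.5 times" a standard PCBA thermal solution


def data_read_at_peak(rate_gb_per_s: float, seconds: float) -> float:
    """Volume read while the drive holds its peak rate, in GB."""
    return rate_gb_per_s * seconds


total_gb = data_read_at_peak(PEAK_READ_GB_PER_S, PEAK_HOLD_SECONDS)

# Assumption: the 2.5x ratio compares read volume at the same 11GB/s rate,
# so the baseline numbers below are inferred, not quoted.
implied_baseline_gb = total_gb / QUOTED_ADVANTAGE
implied_baseline_seconds = PEAK_HOLD_SECONDS / QUOTED_ADVANTAGE

print(f"read at peak: {total_gb} GB (quoted: {QUOTED_TOTAL_GB} GB)")
print(f"implied baseline: about {implied_baseline_gb:.0f} GB "
      f"in about {implied_baseline_seconds:.0f} s")
```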

Designed specifically for high-load AI PC KV Cache scenarios, it sustains Gen5 throughput in real time while fitting the ultra-slim form factor of AI PCs, balancing performance against device design requirements.

Highlight 6: SPU + iSA Breakthroughs in Edge AI Model Operation Bottlenecks

At the summit, Longsys launched the SPU (Storage Processing Unit) and iSA (Intelligence Storage Agent), which together form a closed-loop hardware-software system for edge AI storage: “chip hardware + intelligent scheduling”.

Unlike conventional SSD controllers, the SPU is a dedicated processing unit built for intelligent storage architectures. Fabricated on a 5nm process, it supports a maximum single-drive capacity of 128TB. By comparison, mainstream cSSDs currently top out at 8TB, and high-capacity eSSD solutions are costly. The SPU balances capacity and cost, serves as a cost-effective alternative to HDDs, opens new possibilities for customers exploring eSSD solutions, and could significantly reduce total cost of ownership (TCO).

The SPU features two core capabilities:

· In-memory lossless compression: An average 2:1 compression ratio, tested across text, code, database and other data types, greatly saving SSD capacity and costs;

· HLC (High Level Cache): Offloads warm/cold data to SSDs, reducing DRAM requirements by nearly 40%.
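To make the two ratios concrete, the following Python sketch measures a lossless compression ratio and works through the capacity and DRAM arithmetic. It is illustrative only: the SPU's compression algorithm is not disclosed, so zlib from the Python standard library stands in for it, and the sample data, the 8TB drive and the 32GB DRAM baseline are hypothetical values chosen for the example.

```python
import zlib


def compression_ratio(raw: bytes, level: int = 6) -> float:
    """Return original_size / compressed_size for a lossless zlib round trip."""
    packed = zlib.compress(raw, level)
    # Lossless means decompression restores the input byte for byte.
    assert zlib.decompress(packed) == raw
    return len(raw) / len(packed)


def effective_capacity_tb(physical_tb: float, ratio: float) -> float:
    """Logical data that fits on a drive at a given average compression ratio."""
    return physical_tb * ratio


def dram_after_offload_gb(baseline_gb: float, reduction: float) -> float:
    """DRAM still required after offloading warm/cold data to the SSD."""
    return baseline_gb * (1.0 - reduction)


# Hypothetical sample: repetitive log-style text compresses far better than
# the 2:1 average quoted above; real ratios depend heavily on the data.
sample = b"2026-03-27 INFO request served in 12 ms\n" * 500
ratio = compression_ratio(sample)

print(f"zlib ratio on sample: {ratio:.1f}:1")
print(effective_capacity_tb(8, 2.0))      # 8TB drive at the quoted 2:1 average
print(dram_after_offload_gb(32, 0.40))    # hypothetical 32GB baseline, 40% less
```

Read this way, a 2:1 average ratio roughly doubles the logical data a drive can hold, and a 40% reduction leaves about 60% of the original DRAM footprint.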

As the “brain” of the SPU, the iSA is an intelligent scheduling engine for edge AI inference. MoE large models pose three challenges: huge parameter sizes, rapid KV Cache growth, and I/O latency that disrupts smooth inference. The iSA addresses them through MoE expert offloading, intelligent KV Cache management and intelligent prefetching algorithms, relieving storage scheduling bottlenecks in edge AI inference.
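The general idea behind expert offloading with prefetching can be sketched in a few lines of Python. This is not the iSA's algorithm, which the announcement does not describe; it is a generic least-recently-used (LRU) cache that keeps a few experts in DRAM and leaves the rest on an SSD tier. The class name `ExpertCache`, the dictionary standing in for the SSD, and the prefetch hint are all hypothetical.

```python
from collections import OrderedDict


class ExpertCache:
    """Keep at most `capacity` experts in DRAM; the rest stay on the SSD tier."""

    def __init__(self, capacity: int, ssd_store: dict):
        self.capacity = capacity
        self.ssd_store = ssd_store      # stand-in for expert weights on SSD
        self.dram = OrderedDict()       # ordered from least to most recently used
        self.ssd_reads = 0

    def get(self, expert_id: int) -> bytes:
        """Return expert weights, loading them from SSD on a miss."""
        if expert_id in self.dram:
            self.dram.move_to_end(expert_id)    # mark as most recently used
        else:
            if len(self.dram) >= self.capacity:
                # Weights are read-only and remain on SSD, so no write-back.
                self.dram.popitem(last=False)
            self.dram[expert_id] = self.ssd_store[expert_id]
            self.ssd_reads += 1
        return self.dram[expert_id]

    def prefetch(self, predicted_ids: list) -> None:
        """Load experts expected to be needed next (hypothetical router hint)."""
        for expert_id in predicted_ids[: self.capacity]:
            self.get(expert_id)


ssd = {i: bytes([i]) * 4 for i in range(8)}     # 8 tiny fake experts
cache = ExpertCache(capacity=3, ssd_store=ssd)
for expert_id in [0, 1, 0, 2, 3, 0]:
    cache.get(expert_id)

print(sorted(cache.dram), cache.ssd_reads)      # prints [0, 2, 3] 4
```

In this trace, expert 1 is evicted because it is the least recently used when expert 3 arrives, and only four of the six requests touch the SSD. A production scheduler would also need to overlap the SSD reads with computation, which the sketch leaves out.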

Longsys and AMD have jointly optimized an intelligent agent host based on the Ryzen AI Max+ 395 processor, enabling local deployment of a 397B super-large model. In a 256K ultra-long context (122B) scenario, it reduces DRAM usage by nearly 40%, offering a practical approach to efficient local deployment and large-scale application of super-large models. The two companies will deepen their collaboration in the intelligent agent field, drawing on their respective technology and ecosystem strengths.

Highlight 7: HLC Technology Deployed Across All Edge Scenarios, Enabling DRAM Reduction and Cost Optimization

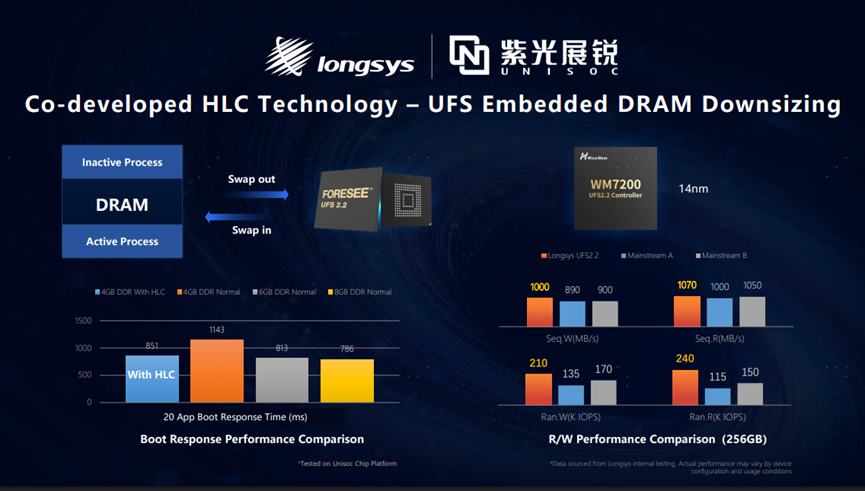

Longsys has deeply integrated HLC (High Level Cache) technology with its SPU and UFS products, deploying it across AI PC and embedded scenarios to address the performance-cost trade-off in edge devices.

· AI PC: HLC adopts a tiered design via the SPU. The performance layer provides an AI-dedicated high-speed cache for offloading large-model experts and KV pairs, while the storage layer handles the OS and general data storage. Through high-priority read/write and low-priority I/O scheduling, it improves the AI experience while cutting DRAM capacity needs and costs.

· Embedded: Co-developed with Unisoc and tested on Unisoc chip platforms, a 4GB DDR configuration paired with HLC technology delivers a 20-app launch response time of 851ms, approaching the performance of 6GB/8GB DDR setups. Longsys’ UFS 2.2 product, equipped with the 14nm WM7200 controller, reaches sequential read and write speeds of up to 1070MB/s and 1000MB/s and random read and write performance of up to 240K and 210K IOPS, surpassing mainstream industry levels. It reduces terminal DRAM requirements and optimizes BOM costs while preserving a smooth user experience and device lifespan.
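The high- and low-priority scheduling mentioned for the AI PC case can be illustrated with a small two-class priority queue. The sketch below is a generic Python example, not the SPU's scheduler: it assumes AI cache traffic is tagged high priority and OS or general I/O low priority, and the class name `IoScheduler` and the request strings are hypothetical.

```python
import heapq
import itertools

HIGH, LOW = 0, 1    # the smaller number is served first


class IoScheduler:
    """Two-class priority queue; arrival order is kept within each class."""

    def __init__(self):
        self._heap = []
        self._seq = itertools.count()   # tie-breaker that preserves arrival order

    def submit(self, priority: int, request: str) -> None:
        heapq.heappush(self._heap, (priority, next(self._seq), request))

    def next_request(self) -> str:
        _, _, request = heapq.heappop(self._heap)
        return request

    def __len__(self) -> int:
        return len(self._heap)


sched = IoScheduler()
sched.submit(LOW, "os: write log file")
sched.submit(HIGH, "ai: read KV block 17")
sched.submit(LOW, "os: index photos")
sched.submit(HIGH, "ai: read expert 5")

# Both AI requests are served before either OS request.
order = [sched.next_request() for _ in range(len(sched))]
print(order)
```

A strict two-class queue like this can starve low-priority requests under sustained AI load, so a real design would normally add aging or a bandwidth reservation for the lower class.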

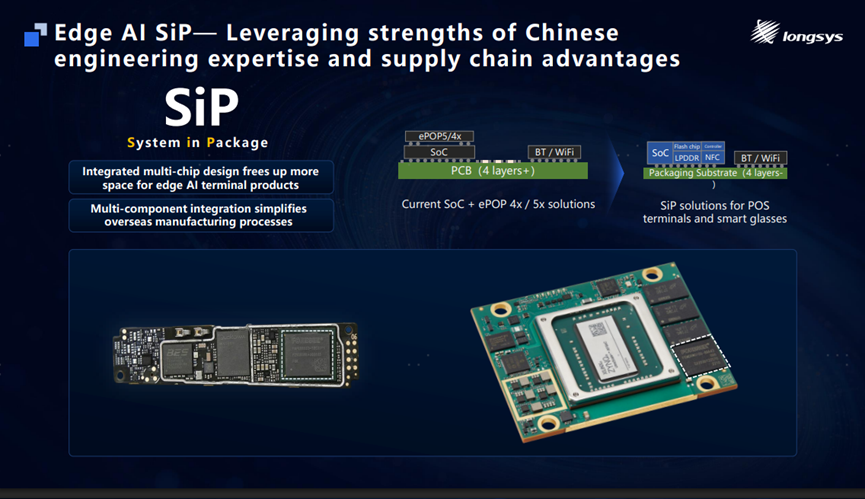

Highlight 8: Edge AI SiP Technology, Leveraging Chinese Engineering and Supply Chain Advantages

Leveraging the R&D strengths of Chinese engineers, Longsys has completed the full-flow design of SiP (System-in-Package), integrating multiple chips such as SoC, eMMC/UFS, LPDDR, Wi-Fi, Bluetooth and NFC into a single package.

For terminal products with stringent requirements on space, thinness and thermal management, such as AI glasses, smart watches and POS machines, this solution significantly reduces hardware size and improves structural layout and thermal performance, making it a highly competitive option. Combined with core local supply chain capabilities, it frees up space inside edge AI products. It also carries SiP’s advantages over to overseas manufacturing, greatly reducing manufacturing complexity abroad and helping products meet the demands of global markets.

At the summit, Longsys presented its full edge AI storage portfolio through eight highlights. From positioning and business model innovation to materials engineering, product upgrades, a closed-loop technology system, scenario deployment, and the combination of local advantages with global operations, Longsys has built a complete edge AI storage solution centered on “integrated storage”.

Moving forward, Longsys will uphold its philosophy of Everything for Memory, continue to deepen its work in storage with technological innovation as the core driving force, and work with global industry chain partners to advance the edge AI industry.
